I.INTRODUCTION

In contemporary society, individuals are increasingly exposed to a range of health risks that may result in varying degrees of paralysis, significantly affecting their daily functioning and independence [1]. According to statistics provided by the Christopher and Dana Reeve Foundation, strokes are the leading cause, accounting for approximately 30% of paralysis cases. This is followed by spinal cord injuries at 23% and multiple sclerosis at 17% [2]. The remaining cases are attributed to neurological and traumatic conditions such as cerebral palsy and traumatic brain injuries [3]. In Vietnam, data reported in Circular 22/2019/TT-BYT show that among individuals suffering from unilateral hand paralysis, approximately 51–55% experience severe impairment, 36–40% are classified as having moderate dysfunction, and 21–25% display mild symptoms [4]. These figures highlight the pressing need for effective rehabilitation technologies and assistive devices to improve patients’ quality of life and restore independence in daily activities, reduce caregiver burden, promote long-term functional recovery, and enable reintegration into social and occupational environments [5].

In recent years, increasing attention has been directed toward the development of wearable robotic devices, particularly robotic gloves, designed to assist hand movement in individuals with neurological impairments [6]. Such devices are especially relevant for patients recovering from strokes, spinal cord injuries, or neurodegenerative diseases, where upper-limb rehabilitation is crucial yet often challenging [7]. A growing body of research has focused on utilizing electromyographic (EMG) signals to control actuation mechanisms, such as servo motors [8] and cable-driven pulleys [9], to facilitate complex finger flexion and extension. For instance, the study in [10] presents a soft robotic glove targeting the middle and ring fingers, providing supplementary assistive force through a novel pulling-ring mechanism. Although the design contributes to improving finger grip strength, it is limited in scope, offering support to only two fingers and lacking a comprehensive assessment of object manipulation capabilities in real-world scenarios. Additional studies [10–13] have prioritized the development of lightweight, affordable, and low-power robotic gloves that aim to restore hand mobility while minimizing discomfort. These designs are often tailored to be user-friendly, encouraging long-term use in home-based rehabilitation settings. Meanwhile, [14] introduces a real-time muscle activity detection algorithm that enables dynamic control of a pneumatically actuated soft glove, targeting users with impaired grasping ability. Despite these advances, a common shortcoming across the literature is the limited integration of complementary sensing modalities such as visual feedback.

Notably, only a limited number of existing rehabilitation glove systems incorporate advanced image processing and computer vision techniques to enhance the device’s contextual understanding of hand–object interactions, which represents a significant opportunity to improve both the functionality and adaptability of assistive gloves [15]. By integrating real-time visual data, the system can dynamically recognize and interpret critical factors such as the shape, size, orientation, and spatial position of objects within the user’s environment, enabling the glove to adjust its actuation patterns accordingly [16]. This allows for more precise, task-specific assistance—for example, modulating grip strength or finger positioning to safely handle fragile, bulky, or irregularly shaped items—thereby promoting safer and more effective rehabilitation exercises and facilitating the transfer of training gains to daily living activities. Despite these clear benefits, to the best of our knowledge, studies referenced in [9–14] have not yet explored or proposed comprehensive algorithms that leverage image or video data to inform glove actuation in real time. Current research tends to focus primarily on mechanical design, motion capture sensors, or muscle activity monitoring without fully exploiting the rich contextual information that computer vision can provide. The development of novel vision-based control strategies, potentially incorporating deep learning methods for object detection and hand pose estimation, could revolutionize glove-assisted rehabilitation by enabling context-aware and intelligent actuation, as well as facilitating closed-loop feedback systems that dynamically adapt to user performance and environmental changes, thereby increasing the overall efficacy and safety of therapy. 
Therefore, integrating sophisticated image processing algorithms with glove control systems stands as a promising and largely untapped direction for advancing smart rehabilitation technologies.

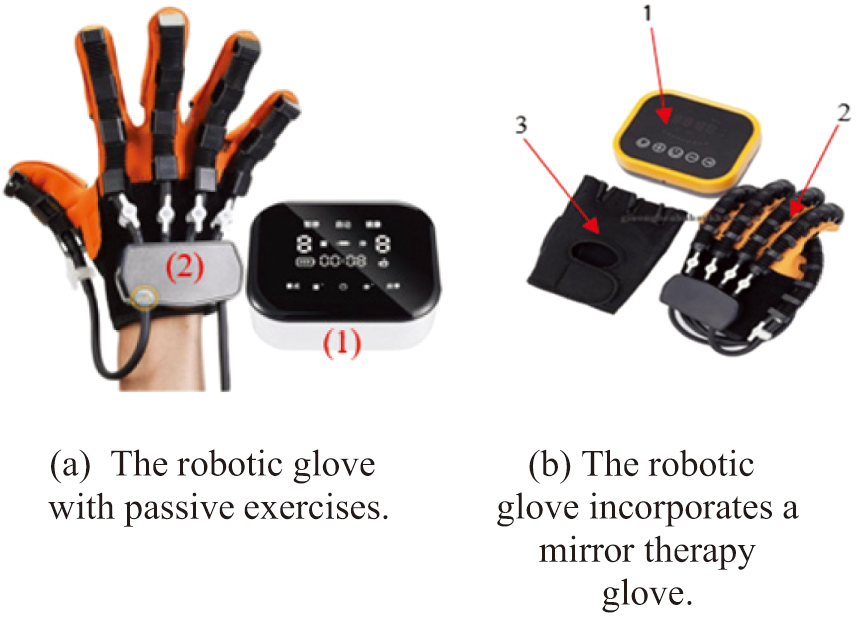

Various types of robotic rehabilitation gloves are currently available on the market, as shown in Fig. 1a and 1b. Fig. 1a depicts a glove with a relatively simple structure that operates on a preset timing mechanism. Its main components include:

- 1.Control box

- 2.Rehabilitation glove

Fig. 1. Types of robotic gloves available on the market. a) Robotic glove for passive exercises. b) Robotic glove paired with a mirror therapy glove.


In contrast, Fig. 1b presents a more advanced design, which includes:

- 1.Control box

- 2.Rehabilitation glove

- 3.Mirror therapy glove

These commercially available products are specifically designed for two types of rehabilitation exercises:

- 1.Passive training

- 2.Mirror therapy training

Passive training (Fig. 1a)

- •operates based on a pneumatic-driven mechanism, in which air is cyclically inflated into and deflated from internal air chambers embedded within the rehabilitation glove. This process is precisely controlled by an external control box, which integrates an air pump, pressure regulation valves, and programmable control circuits. During operation, the control box delivers compressed air to specific sections of the glove, causing the fingers to bend (flexion) as the air chambers expand. Subsequently, the release or redirection of air pressure allows the chambers to deflate, passively returning the fingers to an extended position.

- •is primarily designed for patients who experience complete or near-complete motor paralysis in the upper limbs, particularly those who are unable to initiate voluntary hand or finger movements due to severe neurological or musculoskeletal impairments. This includes individuals in the early stages of stroke recovery, as well as patients with spinal cord injuries, traumatic brain injuries, or advanced stages of neurodegenerative diseases such as amyotrophic lateral sclerosis (ALS).

Mirror therapy training (Fig. 1b)

- •is an advanced rehabilitative approach that integrates passive training with visual-motor stimulation through mirror therapy exercises, offering a synergistic method to promote motor recovery in patients with upper limb impairments. This combined modality is particularly effective for individuals recovering from stroke, hemiplegia, or unilateral limb dysfunction, where one hand remains functional while the other is paralyzed or significantly weakened.

- •utilizes Bluetooth wireless communication technology to achieve real-time synchronization between the rehabilitation glove worn on the affected (paretic) hand and the mirror therapy glove worn on the unaffected (healthy) hand. This wireless synchronization enables the two gloves to operate in concert, allowing movements performed by the healthy hand to be mirrored by the impaired hand through passive mechanical actuation.

- •empowers patients who retain functional movement in one hand to independently and intuitively control the movements of their affected, impaired hand. By leveraging the natural motor commands generated when moving the healthy hand, the system translates these voluntary motions into corresponding passive or assisted movements on the affected side through a synchronized rehabilitation glove. This bilateral coordination enables patients to engage actively in their own rehabilitation process, even if their impaired hand lacks voluntary motor function.

Patients with hand paralysis can be classified as follows:

- 1.Patients with paralysis in both hands—Require full external assistance (use Fig. 1a).

- 2.Patients with paralysis in one hand—Can engage in self-rehabilitation using mirror therapy (use Fig. 1b).

The selection of a robotic glove should be customized to the patient’s condition. Specifically, patients in Category 1 are best suited for the model shown in Fig. 1a, whereas those in Category 2 should use the model illustrated in Fig. 1b.

However, in real-world cases, paralysis often progresses through various stages, which are typically classified as follows:

- 1.Patients with bilateral paralysis.

- 2.Patients with unilateral paralysis.

- 3.Patients with unilateral paralysis but with approximately 70–80% recovery.

This study focuses on patient Categories 2 and 3, with rehabilitation exercises tailored to the specific needs of each group. The authors propose integrating image processing technology to support two types of exercises:

- 1.Mirror therapy exercises, where the movements of the healthy hand are mirrored onto the paralyzed hand.

- 2.Target-based exercises, designed to enhance motor control and functional recovery.

Existing products, such as the device illustrated in Fig. 1b, exhibit several notable limitations that impact their overall usability, patient comfort, and cost-effectiveness. One significant drawback lies in the requirement for patients to wear a specialized mirror therapy glove on their healthy hand. This necessity often leads to discomfort during prolonged therapy sessions, as the glove may restrict natural hand movements, cause sweating, or produce skin irritation due to material properties and fit issues. Such physical constraints can reduce patient compliance and engagement, which are critical for achieving optimal rehabilitation outcomes.

During the technical research and product improvement process, the authors propose employing MediaPipe-based image processing technology for hand recognition.

This method allows for the detection of finger flexion and extension levels through pixel-based tracking, thereby enabling the creation of two distinct rehabilitation exercises:

- 1.Mirror therapy exercises, where movements from the healthy hand are reflected onto the affected hand.

- 2.Target-based exercises, which are particularly beneficial for Category 3 patients, especially younger individuals, by enhancing motivation and engagement during rehabilitation.

By incorporating advanced image processing technology, the authors aim to replace the traditional Bluetooth-based mirror therapy glove with a more sophisticated and intelligent system that facilitates target-based rehabilitation exercises through interactive gaming applications. This innovative approach leverages real-time visual recognition and motion tracking to create a more immersive and personalized therapy experience, allowing patients to engage with virtual objects and scenarios tailored to their specific functional needs. The system is expected to offer patients significantly improved comfort by eliminating the need for cumbersome wearable devices on the healthy hand while enhancing ease of use and accessibility. Moreover, the integration of gamified elements is designed to increase patient motivation and adherence to therapy protocols, ultimately leading to more effective and accelerated upper limb recovery in individuals suffering from hand paralysis. This convergence of image processing and interactive rehabilitation holds great promise for transforming traditional therapeutic paradigms into more engaging, adaptive, and outcome-driven solutions.

Our paper is organized as follows: Section II presents the Hardware Design; Section III describes the Software Design and the Proposed Algorithm for the Robotic Glove; Section IV discusses the Experimental Results; and Section V concludes the paper.

II.HARDWARE DESIGN

The model developed by the authors consists of the following main components:

- 1.Mechanical components

- 2.Electrical components

A.MECHANICAL COMPONENTS

As robotic glove mechanical components are already available on the market, the authors leveraged existing commercial products for experimental purposes. The selected model is the C11 Robotic Glove (Model: SY-HRC11), manufactured in China, as illustrated in Fig. 2 [17].

The structure of this model consists of three main components:

- 1.Glove

- 2.Air tubing

- 3.Soft rubber joints

B.ELECTRICAL COMPONENTS

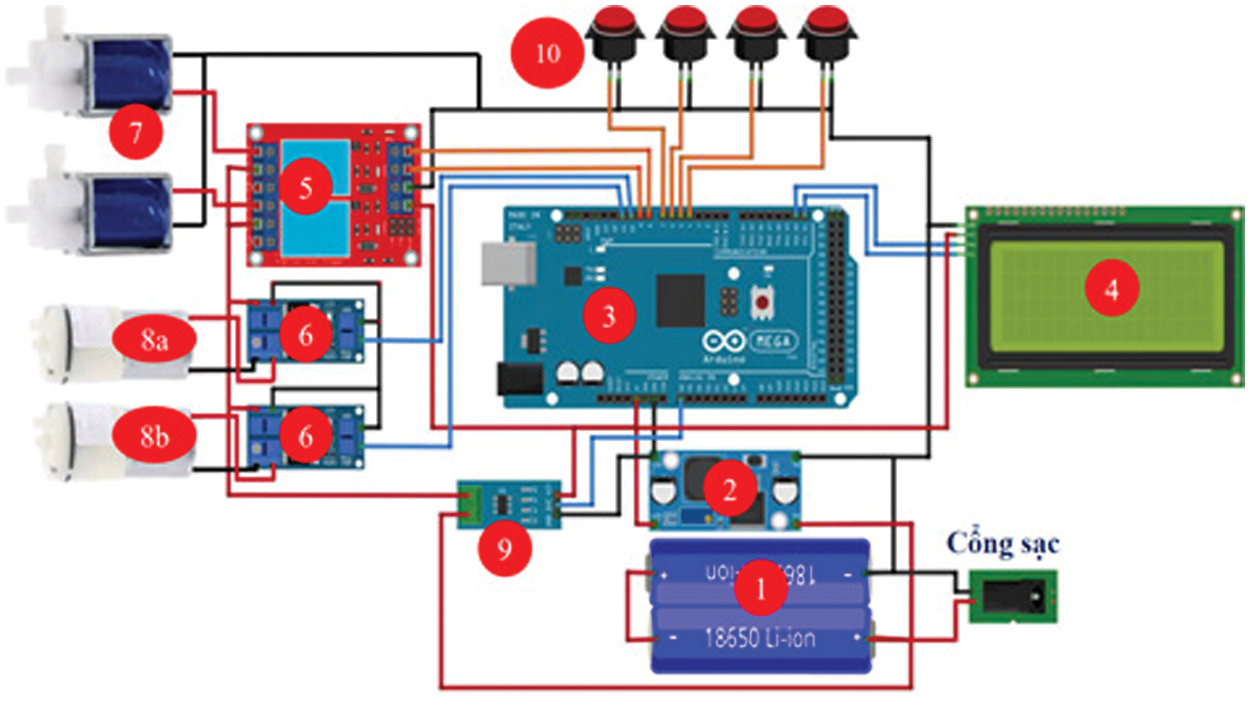

The electrical circuit was independently researched and developed by the authors by purchasing individual components for design and fabrication. It consists of the following devices, as illustrated in Fig. 3 and Fig. 4:

- 1.Lithium battery (7.4 V–2300 mAh).

- 2.LM2596 step-down (buck) converter module, responsible for reducing the voltage from 7.4 V DC to 5 V DC to supply power to the central control circuit and peripheral modules such as the LCD display, relay module, and Hall current sensor (ACS712).

- 3.Central processing unit (Arduino Mega 2560), responsible for processing input and output signals from the system’s devices.

- 4.20x4 LCD display, used to show the user’s operating status and selected modes.

- 5.Two-channel relay module, responsible for controlling the opening and closing of the air valves.

- 6.Motor controller module for air pump, using MOSFET PWM (5V-36V, 15A) for efficient motor control.

- 7.Air valve, responsible for retaining or releasing air from the robotic glove.

- 8.Air pump, responsible for suction and compression of air into the robotic glove.

- 9.Hall current sensor (ACS712) for monitoring current flow.

- 10.Push buttons, used for selecting different operating modes for the robotic glove.

Fig. 3. Wiring diagram of the model.


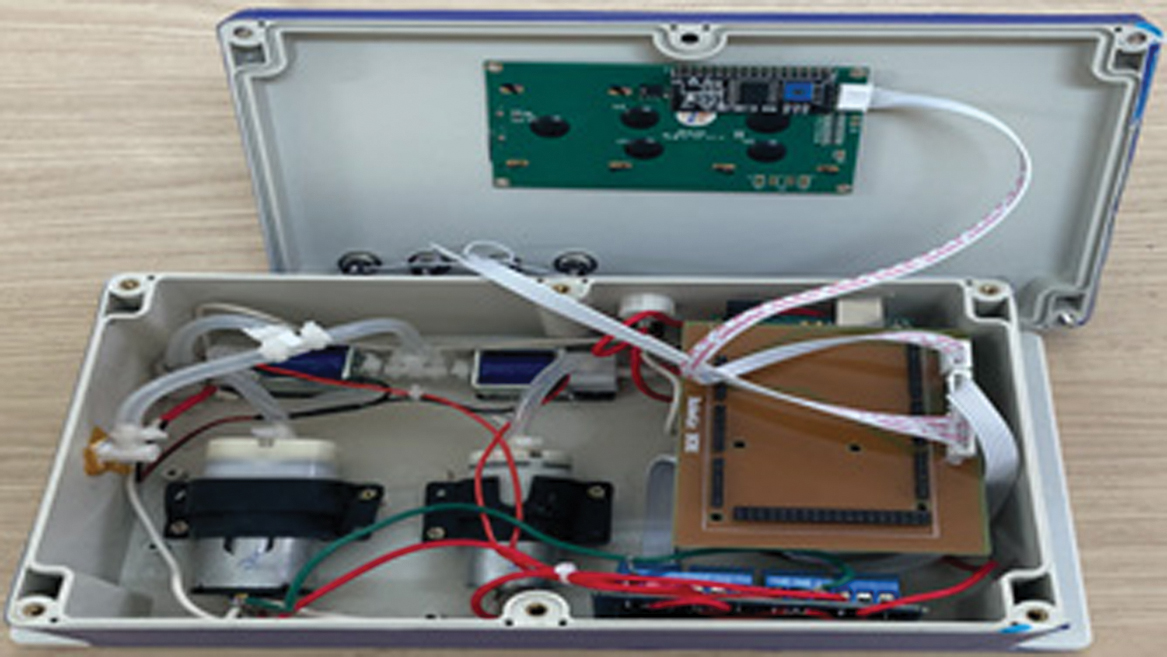

Fig. 4. Internal actual circuit of the model.


The wiring diagram of the system is presented in Fig. 3, while the actual circuit layout is depicted in Fig. 4.

As illustrated in Fig. 3 to Fig. 5, the hardware control unit includes four push buttons: Pause/Start, Mode, Menu, and Reset. The functions of these buttons are as follows:

- ▹Pause/Start: Pauses or starts the training session.

- ▹Mode: Selects either the preset-time passive training mode or the mirror therapy mode, where the movement of the healthy hand is mirrored onto the paralyzed hand.

- ▹Stop: Terminates the training session.

- ▹Reset: Restarts the system.

Fig. 5. External view of the model.

The lithium battery supplies a 7.4 V voltage to the LM2596 step-down module, which then outputs a 5 V voltage to power the microcontroller, LCD display, relay coil, current sensor, and push buttons for selecting training modes.

1).PASSIVE TRAINING MODE

If the passive training mode is selected by pressing the Mode button, the microcontroller receives the command and displays the selected mode on the LCD screen. The microcontroller generates pulse width modulation (PWM) signals to control the MOSFET, thereby supplying voltage to the air pump motor (8a). This pump then delivers air into the robotic glove, causing it to contract (finger flexion). To initiate finger extension, the microcontroller sends a command to activate the relay module and generates PWM signals to control the air suction pump (8b). As a result, both air valves open and pump (8b) removes air from the robotic glove tubing, causing the fingers to extend.
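The flexion/extension sequence described above can be sketched as a small state table. This is only an illustrative Python model of the control logic, not the authors' firmware: the duty-cycle value, the `ActuatorCommand` type, and the function names are hypothetical, and the sketch assumes the valves stay closed (relay off) while pump (8a) inflates the glove, which the text does not state explicitly.

```python
from dataclasses import dataclass

ASSUMED_DUTY = 200  # hypothetical 8-bit PWM duty cycle; the paper gives no value


@dataclass(frozen=True)
class ActuatorCommand:
    pump_8a_duty: int  # PWM duty for the inflating pump (8a)
    pump_8b_duty: int  # PWM duty for the suction pump (8b)
    relay_on: bool     # True means the relay module opens both air valves


def command_for(phase: str) -> ActuatorCommand:
    """Map a training phase to the actuator states described in the text."""
    if phase == "flexion":
        # Pump (8a) delivers air into the glove; valves assumed closed.
        return ActuatorCommand(ASSUMED_DUTY, 0, False)
    if phase == "extension":
        # Relay opens both valves and pump (8b) removes air from the glove.
        return ActuatorCommand(0, ASSUMED_DUTY, True)
    raise ValueError(f"unknown phase: {phase!r}")


def passive_cycle(repetitions: int) -> list:
    """Alternate flexion and extension commands for a preset number of cycles."""
    sequence = []
    for _ in range(repetitions):
        sequence.append(command_for("flexion"))
        sequence.append(command_for("extension"))
    return sequence
```

For example, `passive_cycle(2)` yields four commands that alternate between inflating through pump (8a) and deflating through pump (8b).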

2).MIRROR THERAPY MODE

If the mirror therapy mode is selected, the microcontroller receives finger flexion/extension data from the computer, which captures movements from the healthy hand. The microcontroller then processes the data and generates PWM signals to control the MOSFET and relay modules, following the same sequence as in passive training mode to execute the mirrored movements on the paralyzed hand.

III.SOFTWARE DESIGN AND ALGORITHM PROPOSAL FOR THE ROBOTIC GLOVE

A.THEORETICAL BASIS OF DESIGN

1).PROJECTIVE TRANSFORMATION

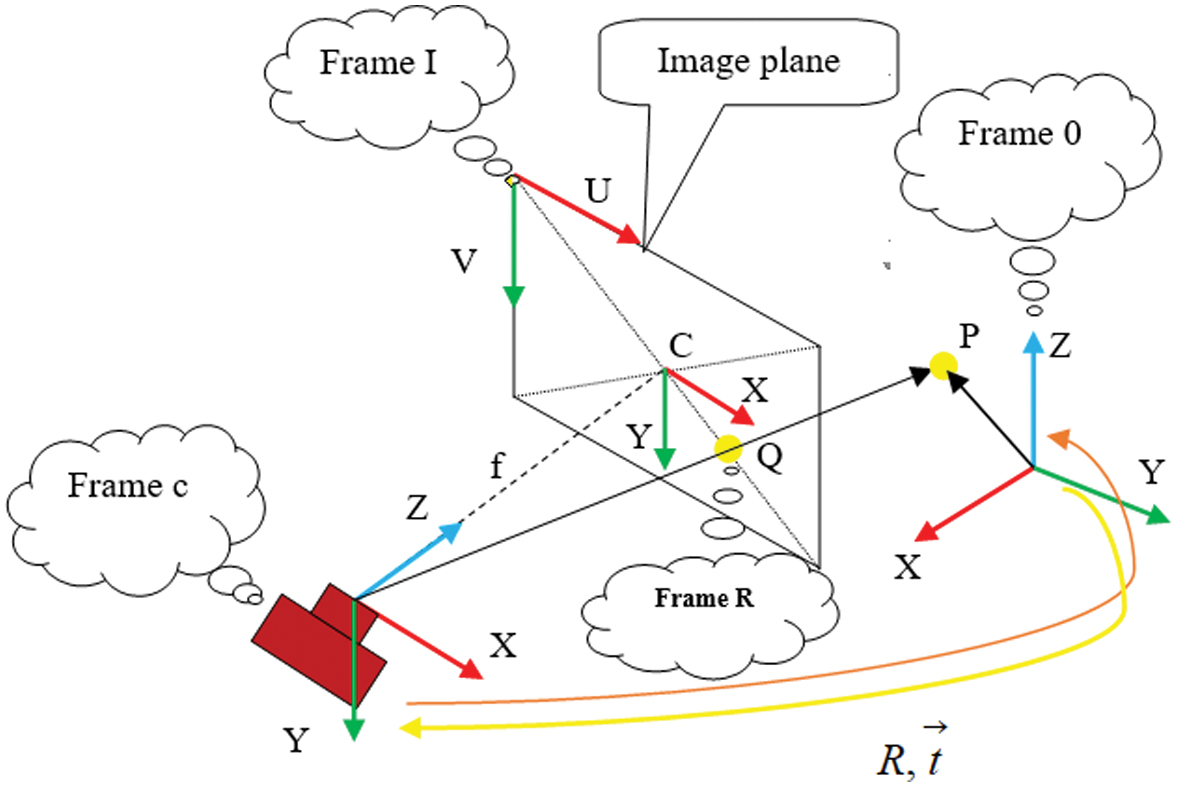

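Consider a point P, represented in the C frame as $\mathbf{P} = (X, Y, Z)^{T}$. The ray from the origin of the C frame to P intersects the image plane, located at a distance f (the focal length) along the Z-axis of the C frame, at a point denoted Q. The process of determining the image coordinates of Q in the I frame is known as projective transformation, as illustrated in Fig. 6.

Fig. 6. Coordinate transformation and central projection camera model.

By similar triangles, the coordinates of Q on the image plane are $x = fX/Z$ and $y = fY/Z$. Let $\rho_w$ and $\rho_h$ represent the width and height of each pixel on the image plane, respectively. The point C denotes the principal point on the image plane, which ideally lies on the Z-axis of the C frame. The frame parallel to the I frame with its origin at C is referred to as the R frame (retinal frame). The homogeneous coordinates of the projected point Q can be derived using equation (1):

$$
\begin{bmatrix} \tilde{x} \\ \tilde{y} \\ \tilde{z} \end{bmatrix}
=
\begin{bmatrix} f & 0 & 0 & 0 \\ 0 & f & 0 & 0 \\ 0 & 0 & 1 & 0 \end{bmatrix}
\begin{bmatrix} X \\ Y \\ Z \\ 1 \end{bmatrix}
\tag{1}
$$

The coordinates of Q in the R frame are $x = \tilde{x}/\tilde{z}$ and $y = \tilde{y}/\tilde{z}$. The homogeneous projection of point Q can be scaled from its physical length to pixel format and then translated from the R frame to the I frame, as expressed in equation (2):

$$
\begin{bmatrix} \tilde{u} \\ \tilde{v} \\ \tilde{w} \end{bmatrix}
=
\begin{bmatrix} 1/\rho_w & 0 & u_0 \\ 0 & 1/\rho_h & v_0 \\ 0 & 0 & 1 \end{bmatrix}
\begin{bmatrix} \tilde{x} \\ \tilde{y} \\ \tilde{z} \end{bmatrix}
\tag{2}
$$

Here, the symbols $u_0$ and $v_0$ refer to the position of point C in pixels within the I frame. The coordinates of the projected point Q in pixel format within the I frame are obtained using $u = \tilde{u}/\tilde{w}$ and $v = \tilde{v}/\tilde{w}$. All these equations can be expressed in the matrix form:

$$
\begin{bmatrix} \tilde{u} \\ \tilde{v} \\ \tilde{w} \end{bmatrix}
= M \begin{bmatrix} I_{3\times 3} & \mathbf{0} \end{bmatrix}
\begin{bmatrix} X \\ Y \\ Z \\ 1 \end{bmatrix},
\qquad
M = \begin{bmatrix} f/\rho_w & 0 & u_0 \\ 0 & f/\rho_h & v_0 \\ 0 & 0 & 1 \end{bmatrix}
$$

Here, the matrix M is referred to as the camera intrinsic parameter matrix, which is provided by the manufacturer.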
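As a numerical illustration of the central projection model, the following minimal Python sketch maps a point in the C frame to pixel coordinates in the I frame. The parameter names (`rho_w`, `rho_h` for the pixel width and height, `u0`, `v0` for the principal point) and all numerical values are assumptions chosen for illustration, not calibration data from the prototype.

```python
def project_to_pixels(point_c, f, rho_w, rho_h, u0, v0):
    """Project a 3D point (X, Y, Z) in the camera frame to pixel coordinates.

    f, rho_w, and rho_h share one length unit (metres here);
    u0 and v0 are the principal point in pixels.
    """
    X, Y, Z = point_c
    if Z <= 0:
        raise ValueError("the point must lie in front of the camera (Z > 0)")
    # Perspective division onto the image plane at distance f.
    x = f * X / Z
    y = f * Y / Z
    # Scale from physical length to pixels, then shift to the image origin.
    u = x / rho_w + u0
    v = y / rho_h + v0
    return u, v


# Hypothetical camera: 4 mm focal length, 2 micrometre square pixels,
# principal point at (320, 240).
u, v = project_to_pixels((0.10, 0.05, 0.50), f=0.004,
                         rho_w=2e-6, rho_h=2e-6, u0=320.0, v0=240.0)
print(round(u, 3), round(v, 3))
```

With these assumed values, the point lands near pixel (720, 440), since $fX/Z = 0.8$ mm corresponds to 400 pixels to the right of the principal point.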

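2).PERSPECTIVE-N-POINT (PnP)

Estimating the pose of the hand from the camera feed requires the position and orientation of frame 0 relative to frame C, expressed as the homogeneous transformation

$$
T = \begin{bmatrix} R & \mathbf{t} \\ \mathbf{0}^{T} & 1 \end{bmatrix}
$$

Here, R is a 3 × 3 orthogonal matrix, referred to as the rotation matrix, and $\mathbf{t}$ is a 3 × 1 vector, known as the translation vector. A point with homogeneous coordinates $\tilde{\mathbf{P}}_0$ in frame 0 is projected to homogeneous pixel coordinates $\tilde{\mathbf{q}}$ in frame I by

$$
\tilde{\mathbf{q}} = M \, \Pi \, T \, \tilde{\mathbf{P}}_0
$$

where $\Pi = \begin{bmatrix} I_{3\times 3} & \mathbf{0} \end{bmatrix}$ is the perspective projection model matrix. For a set of points in frame 0 and their corresponding points in frame I, the equation is solved for T.

The OpenCV library provides the `solvePnP` function for the Perspective-n-Point (PnP) problem. This function takes the camera's intrinsic parameters and object-image point pairs as inputs and returns the rotation and translation that make up the transformation matrix T. This matrix describes the estimated pose of the hand relative to the static scene. These concepts are applied to estimate the hand's pose with respect to the camera.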

3).MEDIAPIPE IMAGE PROCESSING TECHNOLOGY

MediaPipe image processing technology is a highly accurate and user-friendly library for detecting body gestures. This method has been extensively studied for applications such as hand recognition, human body recognition, and facial recognition.

In the medical field, studies [18–23] have developed algorithms for the automatic detection of fingers and tracking positional changes between image frames. In education, image processing has been applied to the subject of Geography to enhance student engagement by enabling zooming in and out of the Atlas map [24]. This approach facilitates easier map manipulation for teachers and students, allowing them to adjust desired positions without requiring mouse interactions or physical proximity to the touch screen.

Image processing is also used for communication among individuals with hearing impairments and congenital deafness. Sign language applications for these individuals have been addressed in studies [25–28]. In the field of sports, this technology assists athletes, trainees, and instructors in Gym and Yoga training by ensuring proper movements, improving training techniques, and preventing injuries, as discussed in studies [29,30].

In MediaPipe’s finger detection technology, determining various levels of finger flexion and extension is a challenging task, and the existing algorithms in MediaPipe do not yet provide built-in support for this function. This paper proposes a novel algorithm for recognizing and determining different levels of finger flexion and extension.

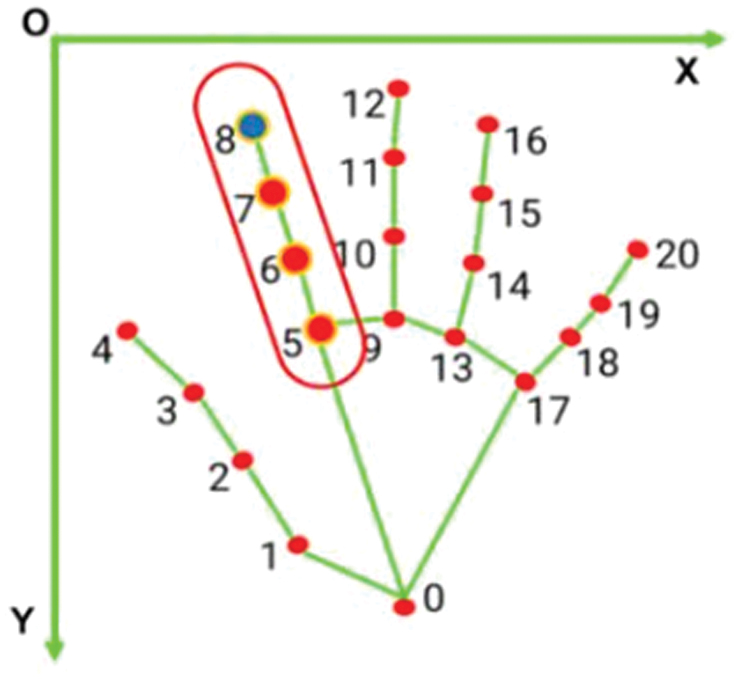

B.PROPOSED ALGORITHM FOR IDENTIFYING 21 LANDMARK POINTS ON THE HAND

The landmark points on the fingers are illustrated in Fig. 7. The input image processing consists of the following stages: (1) image rotation, (2) image resizing, and (3) normalization and color space conversion. Subsequently, a thresholding technique is applied to filter the predicted output results.

Fig. 7. Coordinates of points determining the flexion of the index finger.


C.METHOD FOR NORMALIZING THE COORDINATES OF HAND POINTS IN SPACE

Received Information Package: The output HandLandmarkerResult (HLMR) contains the positions of 21 key points on the hand in three-dimensional space, each given by three coordinate components (x, y, z). The x and y coordinates are normalized to the range [0.0, 1.0] by the width and height of the corresponding image, as illustrated in Fig. 7. The z-coordinate represents the depth of each key point, with the depth at the wrist serving as the origin. A smaller z-value indicates that the key point is closer to the camera. The magnitude of z uses roughly the same scale as x.

Coordinate Normalization: The normalized 2D coordinates are converted to image coordinates according to the formula:

$$
P_x = x \cdot w, \qquad P_y = y \cdot h
$$

where

- ▹x and y are the normalized coordinates obtained from the HandLandmarkerResult package.

- ▹w and h represent the width and height of the image, respectively.

- ▹Px and Py are the coordinates in the two-dimensional image space.
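The conversion from normalized landmark coordinates to image coordinates can be sketched in a few lines of Python. The function name `to_pixel` is hypothetical, and truncating to integers and clamping to the last valid pixel index are design choices made here for illustration, not steps described by the authors.

```python
def to_pixel(x, y, width, height):
    """Convert normalized (x, y) in [0.0, 1.0] to integer pixel coordinates."""
    # Scale by the image size, then clamp so that x = 1.0 or y = 1.0
    # still maps to a valid pixel index.
    px = min(int(x * width), width - 1)
    py = min(int(y * height), height - 1)
    return px, py


# A landmark at the horizontal centre and one quarter of the way down
# a 640 x 480 frame.
print(to_pixel(0.5, 0.25, 640, 480))
```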

D.PROPOSAL OF A NEW ALGORITHM FOR DETERMINING FINGER FLEXION AND EXTENSION AT MULTIPLE LEVELS

After normalizing the information based on the coordinate plane Px, Py, Table I is used to determine the flexion/extension of the thumb, while Table II applies to the remaining fingers. Although multiple levels of flexion and extension can be defined, for the convenience of experimentation, the authors propose four distinct levels of flexion/extension for different fingers, as presented in Table I and Table II.

Table I. Determination of thumb flexion and extension

| Px coordinate | Finger state | Data input to array |
|---|---|---|
| Point 4 < Point 3 | Extension | 0 |
| Point 3 < Point 4 < Point 2 | Flexion level 1 | 1 |
| Point 2 < Point 4 < Point 1 | Flexion level 2 | 2 |
| Point 4 > Point 1 | Flexion level 3 | 3 |

Table II. Determination of flexion/extension for the remaining fingers

| Py coordinate | Finger state | Data input to array |
|---|---|---|
| Point 8 < Point 7 | Extension | 0 |
| Point 7 < Point 8 < Point 6 | Flexion level 1 | 1 |
| Point 6 < Point 8 < Point 5 | Flexion level 2 | 2 |
| Point 8 > Point 5 | Flexion level 3 | 3 |
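The threshold rules of Table I and Table II can be expressed as a short Python sketch. Several points are assumptions rather than statements from the tables: the rules for the index finger (points 5 to 8) are extended to the middle, ring, and little fingers using the standard MediaPipe landmark numbering (9 to 12, 13 to 16, 17 to 20); ties between coordinates, which the tables do not cover, fall into the higher level; and the function names and the `synthetic_hand` test helper are hypothetical.

```python
# Landmark indices ordered as (tip, distal joint, middle joint, base joint).
THUMB = (4, 3, 2, 1)
FINGERS = ((8, 7, 6, 5), (12, 11, 10, 9), (16, 15, 14, 13), (20, 19, 18, 17))


def flexion_level(tip, distal, middle, base):
    """Return 0 (extension) or flexion level 1-3 from one coordinate axis."""
    if tip < distal:
        return 0
    if tip < middle:
        return 1
    if tip < base:
        return 2
    return 3


def hand_levels(points):
    """points: 21 (Px, Py) pairs; returns [thumb, index, middle, ring, little]."""
    if len(points) != 21:
        raise ValueError("expected 21 landmark points")
    # Table I: the thumb is classified on the Px coordinate.
    levels = [flexion_level(*(points[i][0] for i in THUMB))]
    # Table II: the remaining fingers are classified on the Py coordinate.
    for joints in FINGERS:
        levels.append(flexion_level(*(points[i][1] for i in joints)))
    return levels


def synthetic_hand(tip_value):
    """Build a test hand whose joints sit at 20, 30, 40 on both axes."""
    points = [(0.0, 0.0)] * 21
    for tip, distal, middle, base in (THUMB,) + FINGERS:
        points[tip] = (tip_value, tip_value)
        points[distal] = (20.0, 20.0)
        points[middle] = (30.0, 30.0)
        points[base] = (40.0, 40.0)
    return points


print(hand_levels(synthetic_hand(25.0)))  # every tip lies between joints 20 and 30
```

Because the rules compare raw coordinates, the result depends on the hand's orientation in the image; a practical implementation would likely need to account for left and right hands when evaluating the thumb.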

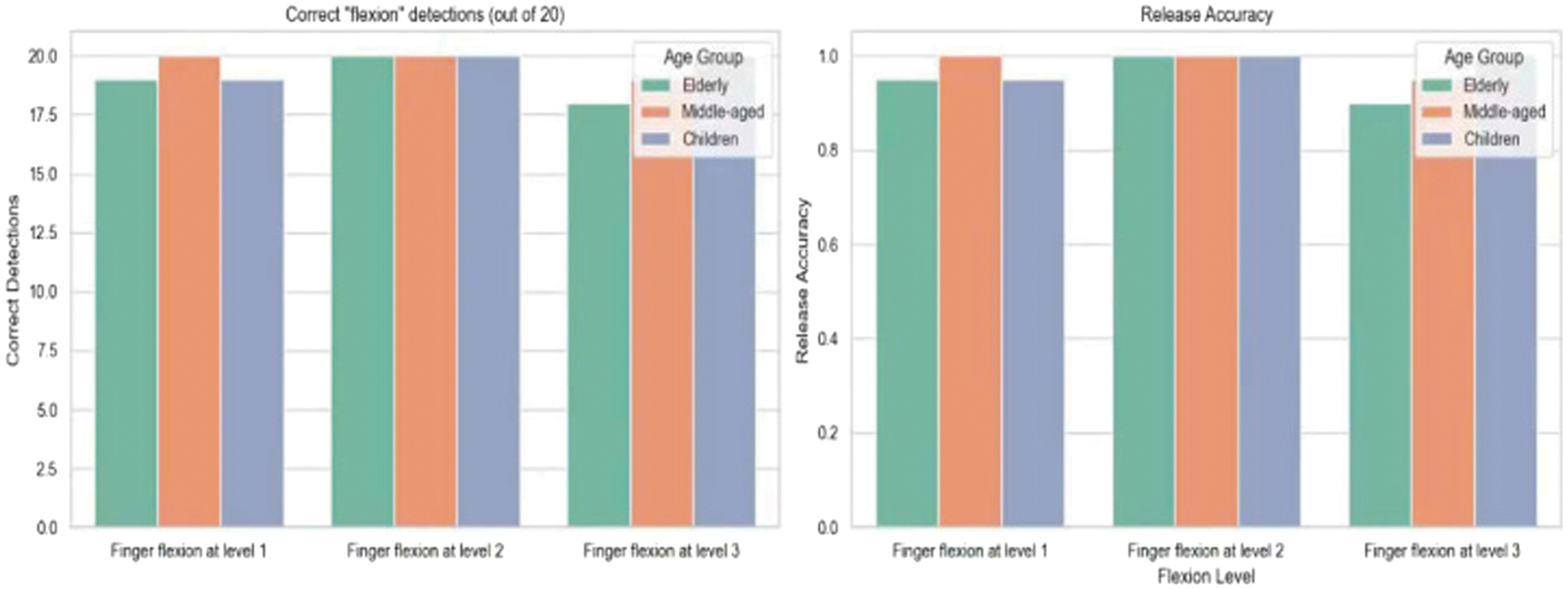

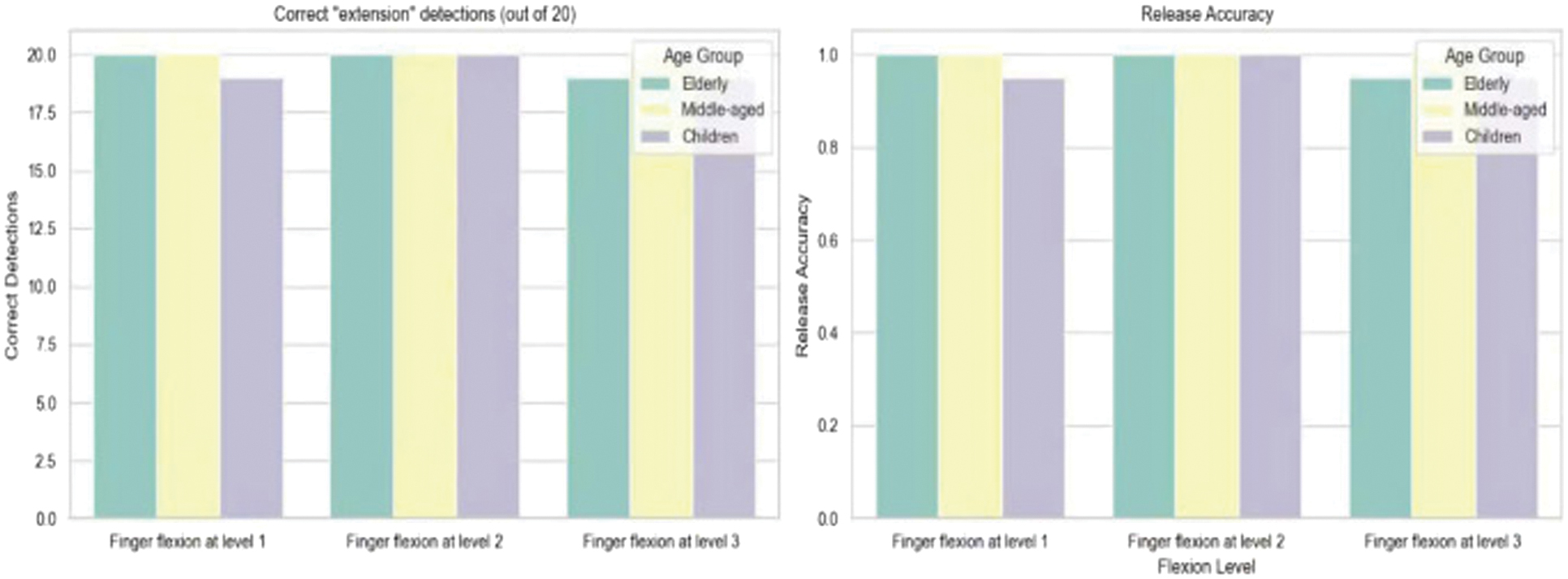

Table III. Detection performance of different age groups operating the robotic glove (20 trials per condition)

| Age group | Level | Correct “flexion” detections (out of 20) | Flexion accuracy | Correct “extension” detections (out of 20) | Extension accuracy |
|---|---|---|---|---|---|
| | Finger flexion at level 1 | 19 | 95% | 20 | 100% |
| | Finger flexion at level 2 | 20 | 100% | 20 | 100% |
| | Finger flexion at level 3 | 18 | 90% | 19 | 95% |
| | Finger flexion at level 1 | 20 | 100% | 20 | 100% |
| | Finger flexion at level 2 | 20 | 100% | 20 | 100% |
| | Finger flexion at level 3 | 19 | 95% | 20 | 100% |
| | Finger flexion at level 1 | 19 | 95% | 19 | 95% |
| | Finger flexion at level 2 | 20 | 100% | 20 | 100% |
| | Finger flexion at level 3 | 20 | 100% | 19 | 95% |

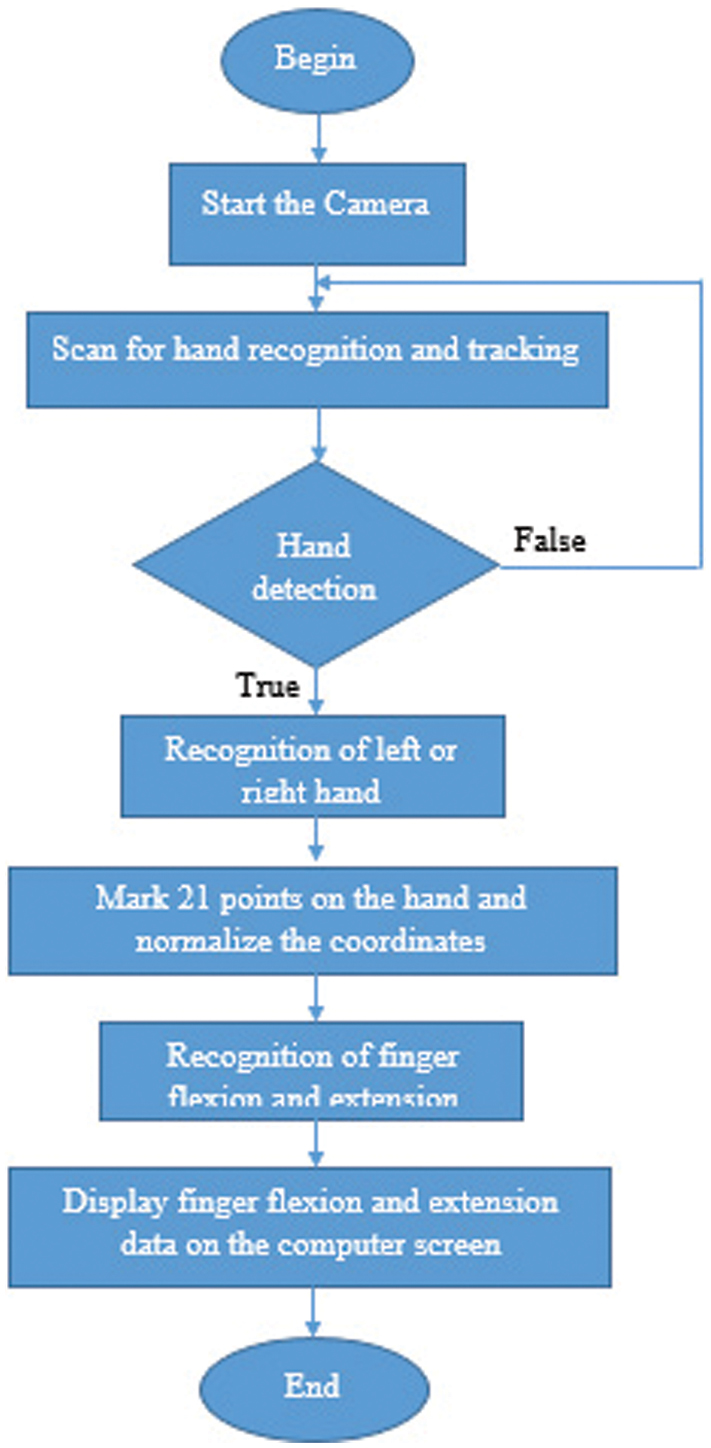

The finger control algorithm is shown in Fig. 8.

Algorithm explanation according to Fig. 8:

Fig. 8. Algorithm for recognizing finger flexion/extension at various levels.


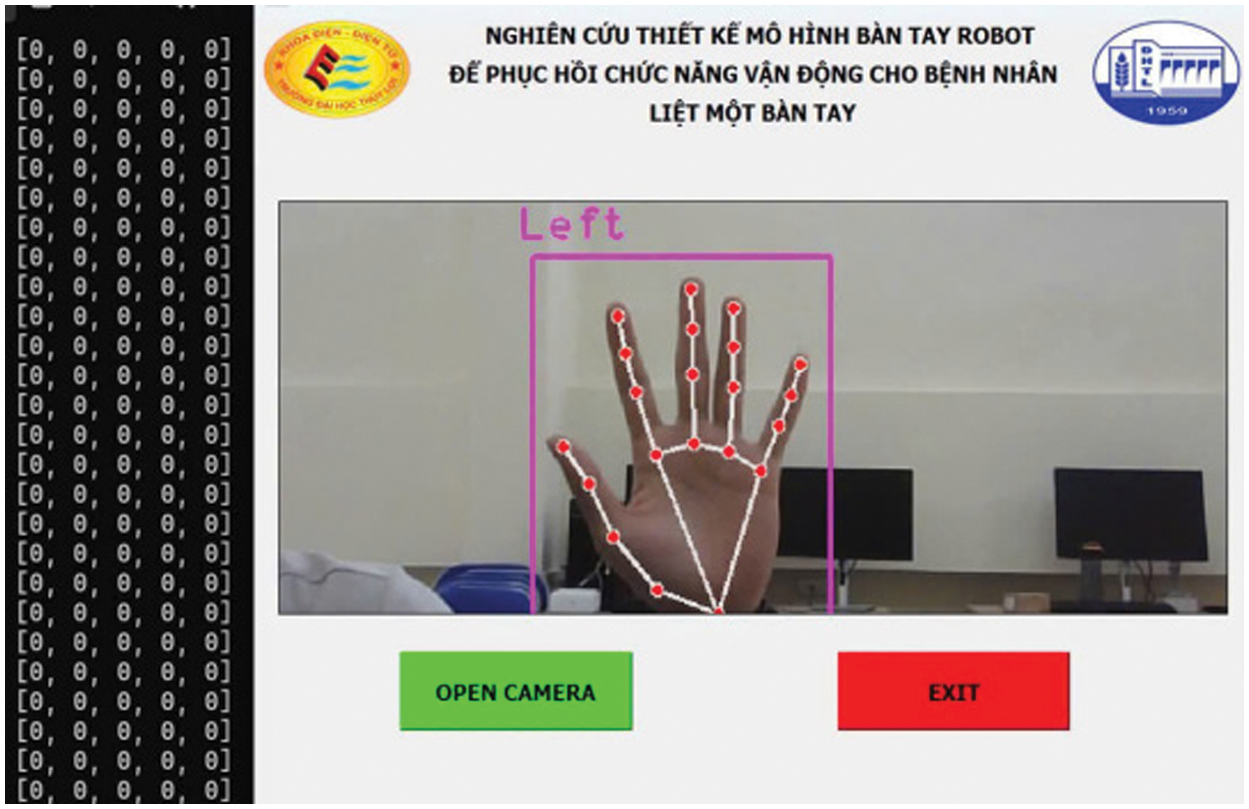

When the start button is pressed, the program initializes the camera. It then proceeds to scan and recognize the hand. If a hand is detected within the frame, the system identifies whether it is a left or right hand. Following this, 21 key points on the hand are marked, and their coordinates are normalized. The coordinate data of these 21 points is then used to determine the flexion/extension of the fingers, which is displayed on the screen. Simultaneously, the flexion/extension data of the fingers is transmitted via the USB port to the microcontroller using the Universal Asynchronous Receiver/Transmitter (UART) communication protocol, as illustrated in Fig. 9.

Fig. 9. Interface and algorithm implemented on the computer.


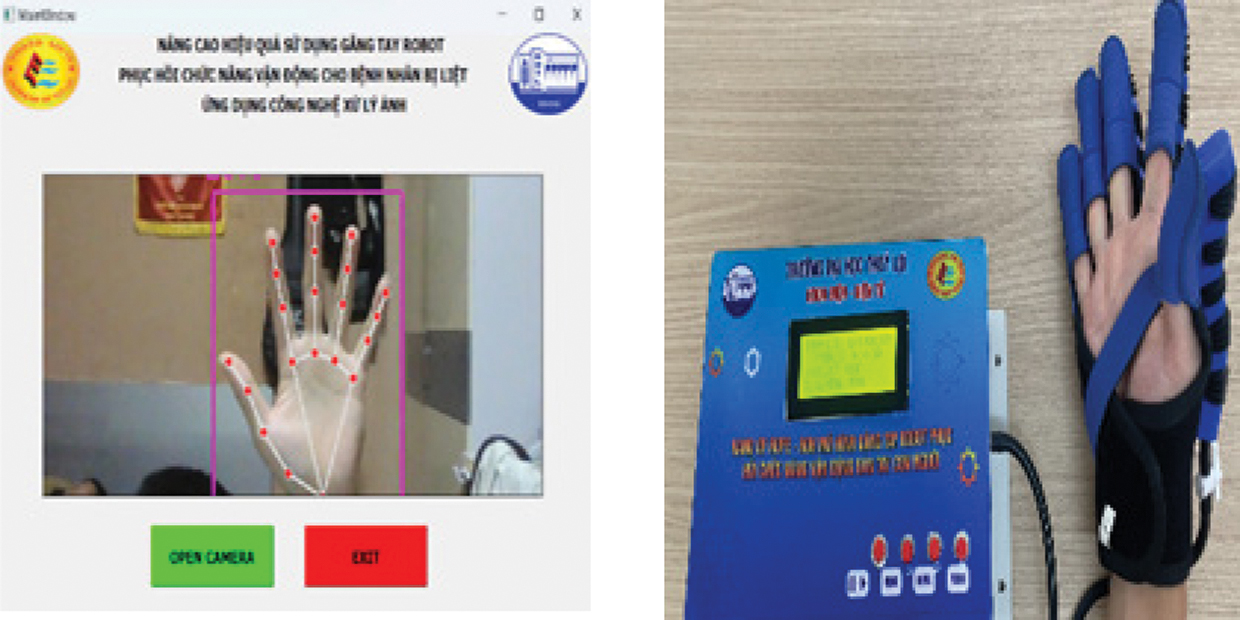

IV.EXPERIMENTAL RESULTS

The actual completed product model is shown in Fig. 10.

Fig. 10. Completed product model.


A.MIRROR THERAPY EXERCISE FROM THE HEALTHY HAND TO THE AFFECTED HAND

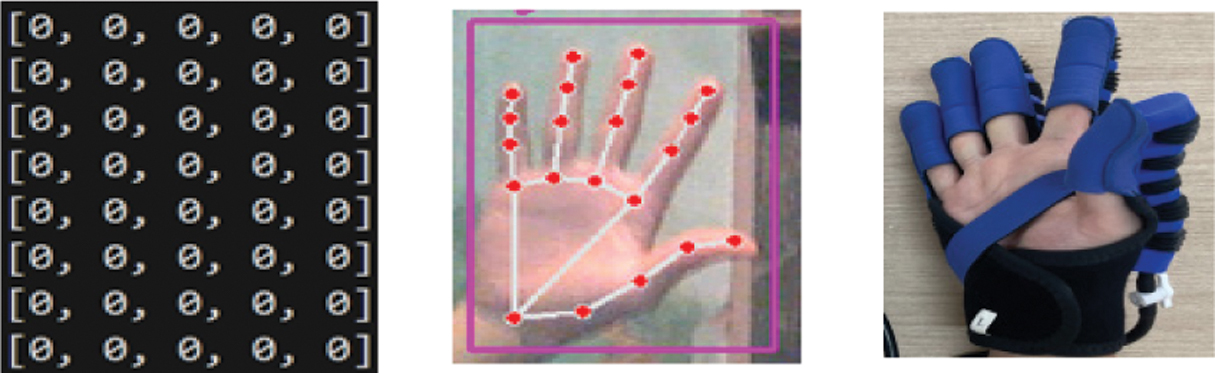

The results of finger flexion/extension recognition are presented in Fig. 11 to Fig. 14. As shown in Fig. 11, when all the intact fingers of the patient are fully extended, the MediaPipe technology identifies the key points on the intact fingers. This recognition generates a one-dimensional array of [0,0,0,0,0], which is then transmitted from the computer to the central microcontroller circuit via the UART communication protocol. Consequently, all the paralyzed fingers of the patient will also extend. This one-dimensional array is a variable, where the first element from left to right corresponds to the thumb, followed by the index finger, middle finger, ring finger, and little finger.
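To make the data flow concrete, the sketch below packs the five-element array into a byte frame suitable for a serial link and unpacks it again. The paper does not specify the frame format, so the comma-separated ASCII line used here, as well as the function names, are purely hypothetical; only the finger order (thumb first, little finger last) and the level range 0 to 3 come from the text.

```python
FINGER_ORDER = ("thumb", "index", "middle", "ring", "little")
VALID_LEVELS = (0, 1, 2, 3)


def encode_levels(levels):
    """Pack five flexion levels into an ASCII frame such as b'0,0,0,0,0\\n'."""
    if len(levels) != len(FINGER_ORDER):
        raise ValueError("expected one level per finger")
    if any(level not in VALID_LEVELS for level in levels):
        raise ValueError("levels must be 0, 1, 2, or 3")
    return (",".join(str(level) for level in levels) + "\n").encode("ascii")


def decode_levels(frame):
    """Inverse of encode_levels; returns a finger-name to level mapping."""
    levels = [int(part) for part in frame.decode("ascii").strip().split(",")]
    if len(levels) != len(FINGER_ORDER):
        raise ValueError("malformed frame")
    if any(level not in VALID_LEVELS for level in levels):
        raise ValueError("levels must be 0, 1, 2, or 3")
    return dict(zip(FINGER_ORDER, levels))


frame = encode_levels([0, 0, 0, 0, 0])  # all fingers extended
print(frame, decode_levels(frame))
```

A line-terminated text frame is easy to inspect on a serial monitor; a more compact binary encoding would serve equally well.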

Fig. 11. Finger extension at level 0.


When the recognition result of the intact hand is at level 1, a one-dimensional array of [1,1,1,1,1] is generated and transmitted from the computer to the microcontroller via UART. Consequently, all the paralyzed fingers will flex at level 1, as shown in Fig. 12.

Fig. 12. Finger flexion at level 1.


When the recognition result of the intact hand is at level 2, a one-dimensional array of [2,2,2,2,2] is generated and transmitted from the computer to the microcontroller via UART. Consequently, all the paralyzed fingers will flex at level 2, with the thumb remaining at level 0, as shown in Fig. 13.

Fig. 13. Finger flexion at level 2.


Figure 14 illustrates the flexion at level 3 for the paralyzed fingers when mirrored from the intact hand.

Fig. 14. Finger flexion at level 3.


The detected flexion/extension levels are output as a one-dimensional array whose values match the preset configurations. This image recognition result meets the specified requirements for rehabilitation exercises, ensuring proper flexion and extension of the paralyzed hand, as shown in Fig. 15. The patient expressed high satisfaction with the authors' prototype.

Fig. 15. Elderly individual practicing the mirror therapy exercise from the healthy hand to the paralyzed hand.


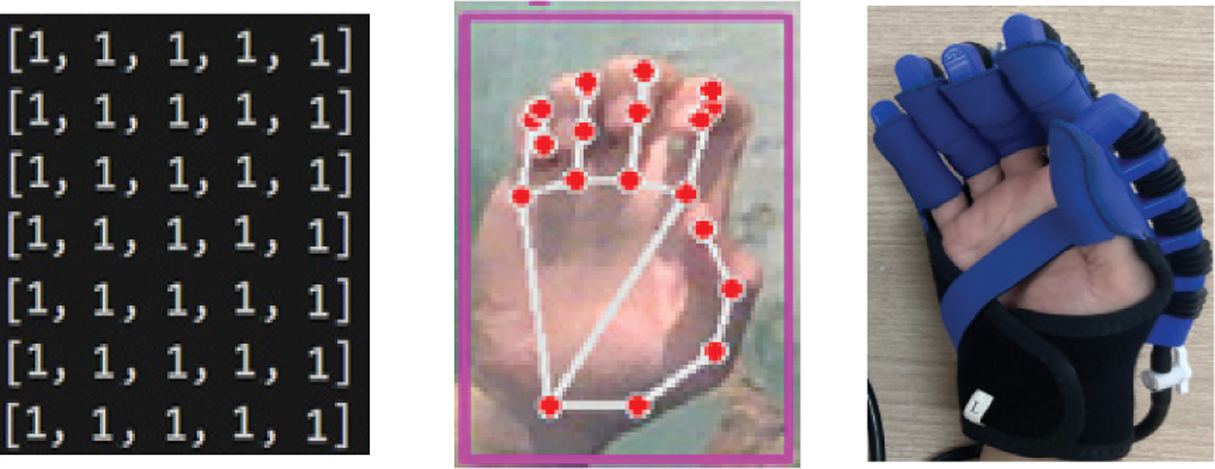

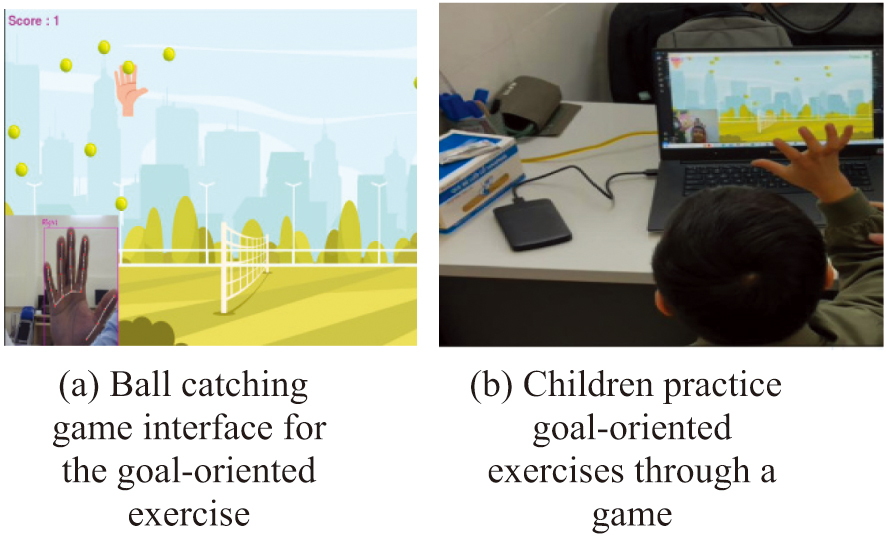

B.GOAL-ORIENTED EXERCISE THROUGH BALL-CATCHING GAME

Figure 16a shows the game interface for the goal-oriented exercise, which was independently developed by the research team using the Python programming language. This proposed method is designed for patients with one-sided paralysis who have recovered approximately 70–80% of their functionality.

Fig. 16. Mirror therapy exercise and goal-oriented exercise. a) Ball-catching game interface for the goal-oriented exercise. b) Children practice goal-oriented exercises through a game.


Figure 16b shows children performing the goal-oriented exercise. Experimental results indicate that patients are highly satisfied and enthusiastic about the authors’ product model.

Table III presents data for three different age groups: children, middle-aged adults, and the elderly. Training levels are categorized into three stages: level 1, level 2, and level 3. Each level involves distinct hand activities, including the “flexion” mode (Fig. 17) and the “extension” mode (Fig. 18).

Fig. 17. Accuracy of hand flexion detection across different training levels and age groups.


Fig. 18. Accuracy of hand extension detection across different training levels and age groups.


In the flexion mode, 20 trials were conducted at each training level for each age group. Accuracy at levels 1 and 3 ranged from 90% to 100%, whereas level 2 reached 100% in every age group, as illustrated in Fig. 17 and Table III.

Similarly, for the extension mode, 20 trials were conducted at each level for each age group. Accuracy at levels 1 and 3 ranged from 95% to 100%, while level 2 again reached 100% regardless of age group, as shown in Fig. 18 and Table III.
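The percentages in Table III follow directly from the number of correct detections out of 20 trials. The short Python sketch below reproduces that arithmetic and pools the three age groups per level; the counts are copied from Table III in row order, while the variable and function names are illustrative.

```python
TRIALS = 20

# Correct detections per level, one entry per age group (Table III row order).
FLEXION = {1: [19, 20, 19], 2: [20, 20, 20], 3: [18, 19, 20]}
EXTENSION = {1: [20, 20, 19], 2: [20, 20, 20], 3: [19, 20, 19]}


def accuracy(correct, trials=TRIALS):
    """Detection accuracy in percent for one condition."""
    return 100.0 * correct / trials


def pooled_accuracy(counts, trials=TRIALS):
    """Accuracy in percent over all age groups at one level."""
    return 100.0 * sum(counts) / (trials * len(counts))


for level in (1, 2, 3):
    print(level,
          [accuracy(c) for c in FLEXION[level]],
          round(pooled_accuracy(FLEXION[level]), 1),
          round(pooled_accuracy(EXTENSION[level]), 1))
```

Pooling shows that level 2 is the only level with 100% accuracy in both modes, while levels 1 and 3 fall a few percentage points lower.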

V.CONCLUSION

This paper introduced an image processing algorithm tailored for the robotic glove system. The algorithm was implemented and verified through experimental testing on the developed prototype. The results confirmed that the algorithm met the predefined performance criteria, including accurate tracking of healthy hand movements and reliable replication of flexion and extension actions at the intended intensity levels.

Building on this, an intelligent robotic glove model featuring several innovative capabilities was proposed, significantly enhancing the rehabilitation experience for patients with hand paralysis by making the process more accessible and efficient.

Future work will focus on developing an AI-based algorithm capable of recognizing hand gestures, thereby extending the glove’s functionality to support patients not only in rehabilitation training but also in performing everyday tasks.