I.INTRODUCTION

John Dewey [1] once observed that “thinking begins at what may be called a fork in the road, an ambiguous situation” (p. 11). In other words, true thinking arises from moments of uncertainty, specifically when assumptions are challenged and established paths are no longer reliable. For many educators today, this moment of disruption has arrived: higher education stands at precisely such a crossroads. The university classroom, long regarded as a space for cultivating critical inquiry and intellectual rigor, is now at the epicenter of a profound epistemological and pedagogical shift.

The rise of generative AI, especially since ChatGPT’s debut in late 2022, has intensified this shift, leaving many educators both amazed and uneasy. Large language models (LLMs) can now ace standardized tests, craft convincing academic essays, and mimic analytical reasoning. As a result, they are being integrated into various aspects of academic life: from teaching and assessment to research and administrative tasks. Such tools raise urgent questions not only about academic integrity and assessment design but also about the emotional landscape of teaching itself.

University teachers are now confronting a range of affective challenges or disruptions that are rarely discussed in formal policy or pedagogical training. Feelings of uncertainty, disorientation, professional inadequacy, and even grief are increasingly common.

A cross-national survey involving 1,754 educators revealed deep-seated concerns about AI’s impact on critical thinking in teaching. While educators recognize AI’s potential benefits, they express significant anxiety about AI impairing their ability to foster critical thinking and uphold pedagogical agency [2]. A qualitative longitudinal study with EFL teachers highlights common introspective questions such as, “If AI is a language wizard, what value do I bring as an EFL teacher?”, reflecting a perceived erosion of facilitatory roles in original thought [3].

These affective responses are not peripheral to the current crisis; rather, they are central to understanding how educators are navigating this transformative period. In a global survey of 353 educators, many reported increased anxieties about reduced interpersonal interaction and difficulty sustaining meaningful learning in AI-augmented classrooms, despite acknowledging AI’s efficiency and automation benefits [4]. A review [5] applying the “five stages of grief” to AI adoption found that educators often express professional disorientation, diminished control over teaching, and a perceived loss of the human elements that define education, leading to existential distress.

These affective jolts commonly collide with professional identity and emotional labor. Teaching already requires “managing feeling” to meet institutional and student-facing “feeling rules” (e.g., be confident, empathic, in control). When AI unsettles long-held craft knowledge (e.g., how to cultivate original writing or scaffold inquiry), the emotional labor of projecting authority and care intensifies, producing strain and role erosion [6]. Recent faculty studies on GenAI show exactly these tensions: shifts in agency, recalibration of roles, and divergent stances toward adoption depending on how identity and values are implicated [7].

This dynamic has been conceptualized in recent literature as GenAI-induced cognitive dissonance—where the psychological tension emerges from the mismatch between AI-enabled efficiency and an educator’s belief in originality and effort-driven learning. In a study exploring faculty perceptions [8], many instructors expressed anxiety over students using AI in ways that challenge academic integrity and authenticity. This contributes to dissonance between their role as educators committed to learning and the reality of widespread AI usage [9].

This article examines the emotional realities of teaching in the post-ChatGPT era. Drawing on affect theory, cognitive dissonance theory, identity theory, and assessment theory, it explores how university instructors are experiencing and responding to the rapid integration of AI into educational contexts. It focuses on three research questions:

1. In what ways does the rise of AI in higher education impact faculty members’ sense of pedagogical identity and professional self-concept?

2. What affective tensions, including emotional disruption and cognitive dissonance, arise for faculty as they navigate AI-driven transformations in teaching?

3. In what ways do faculty experience assessment-related anxiety when integrating generative AI tools, such as ChatGPT, into their teaching practices?

II.LITERATURE REVIEW

The rapid integration of generative AI—particularly LLMs like ChatGPT—has sparked what scholars term a pedagogical rupture in higher education [10,11]. While much of the academic discourse has focused on the implications of AI for academic integrity, learning outcomes, and curriculum design [12,13], a growing body of literature suggests that this technological shift is also producing profound affective consequences for university educators. This review synthesizes recent empirical and theoretical research to examine how AI integration shapes faculty members’ pedagogical identity and self-concept, while also generating affective tensions such as cognitive dissonance and assessment-related anxiety.

A.IDENTITY AND PROFESSIONAL SELF-PERCEPTION

Erikson’s [14] identity theory focused on the development of a coherent sense of self and the negotiation of roles within societal contexts. Faculty identity in higher education is closely tied to pedagogical authority, assessment design, and intellectual sovereignty. These dimensions shape how faculty perceive themselves as professionals entrusted with cultivating critical thinking, creativity, and academic integrity. A study [15] emphasized that teachers’ professional identities are shaped by their roles as knowledge providers and assessors. The introduction of AI tools that can perform these tasks has led to concerns about diminished professional relevance.

Recent empirical studies illustrate growing concern among instructors regarding generative AI’s potential impact on their professional identity. For example, a mixed-methods study [16] with 178 faculty members at a US university found that although instructors generally recognized the utility of generative AI, many expressed anxiety that AI might erode their distinct human-centered teaching capacities—such as instructional nuance and critical responsiveness—even if they were not outright replaced.

Similarly, [17] surveyed faculty across 76 countries and highlighted tensions between perceived pedagogical benefits and ethical unease; instructors acknowledged GenAI’s efficiency but worried it could dilute pedagogical ownership and undermine their sense of authorship. Studies using technology acceptance frameworks further contextualize these identity concerns. By using a UTAUT2 model, [18] report that although performance expectancy and ease of use predicted intentions to adopt AI tools, policy mandates and the need to redesign teaching practices significantly increased stress and undermined faculty confidence in their professional efficacy. In parallel, education scholars have shown that anxiety over changing disciplinary norms and assessment methods can heighten faculty’s sense of professional vulnerability, even where job security is stable [9].

Collectively, these recent studies reveal a dual dynamic: faculty view generative AI as a potentially supportive tool, yet simultaneously perceive it as a force that may destabilize traditional dimensions of pedagogical authority and intellectual autonomy. These tensions manifest in emotional experiences such as professional apprehension, a diminished sense of ownership over curriculum, and ethical uncertainty. This underscores the importance of exploring how faculty negotiate their professional self-concept amidst AI-induced pedagogical shifts.

B.EMOTIONAL RESPONSES TO AI INTEGRATION

Affect theory emphasizes the pre-conscious, embodied responses individuals have to change, highlighting subtle shifts in emotional experience [19]. In the context of higher education, the introduction of generative AI tools has elicited a range of emotional reactions from teachers. A Spanish study titled “Are University Teachers Ready for Generative Artificial Intelligence? Unpacking Faculty Anxiety in the ChatGPT Era” [20] examines specific sources of faculty anxiety related to AI adoption. It identifies three primary anxiety domains: concern about student misuse (e.g., plagiarism), fear of inaccurate AI-generated content, and anxiety over professional role change. The findings reveal that anxiety about student misuse and AI inaccuracies significantly reduces teachers’ intention to adopt AI tools, mediated by lower performance expectancy. However, anxiety pertaining to broader professional change (e.g., the devaluation of teaching roles) did not significantly affect adoption intention—likely due to high job security in Spanish public universities. Similarly, [21] observed that educators reported feelings of inadequacy and fear of obsolescence as AI began to encroach upon traditional teaching roles. These emotional responses underscore the relevance of affect theory in understanding how teachers process and react to technological disruptions in their professional environments.

These affective tensions are exacerbated by the speed at which AI technologies are being integrated into university systems, often with little consultation or pedagogical support. Emotions are not private states but are socially and institutionally mediated [22]. Instructors’ anxieties about AI are shaped by broader narratives of progress, efficiency, and innovation—discourses that frequently sideline the emotional and relational dimensions of teaching [23]. As such, the growing emphasis on “digital transformation” in education risks further alienating teachers from their core pedagogical values and practices [10].

C.COGNITIVE DISSONANCE IN TEACHING PRACTICES

Cognitive dissonance theory [24] posits that individuals experience psychological discomfort when confronted with conflicting beliefs or behaviors, motivating them to resolve the inconsistency. In the realm of higher education, the rise of AI presents such conflicts for educators. A study [25] highlighted that teachers experienced cognitive dissonance when their established pedagogical beliefs were challenged by the capabilities of AI, leading to a reevaluation of their teaching methods. Similarly, [3] reported that educators struggled to reconcile their traditional assessment practices with the ease of AI-generated student work, prompting a reassessment of academic integrity standards.

A conceptual study [8] introduced the notion of GenAI-induced cognitive dissonance, in which faculty and students experience psychological tension when using AI tools that conflict with core pedagogical values such as originality, academic effort, and intellectual ownership. The study explores how reliance on AI for writing, feedback, or lesson design can trigger dissonance between professional identity beliefs and pragmatic efficiencies. It recommends strategies such as reflective pedagogy, transparent AI use, and task redesign to mitigate this dissonance. The framework explicitly connects cognitive dissonance with emotional disruption that manifests as discomfort, ambivalence, or resistance among educators adapting to AI integration. These instances illustrate how cognitive dissonance theory can elucidate the internal conflicts educators face as they integrate AI into their teaching practices.

D.ASSESSMENT CHALLENGES IN THE AI ERA

Boud and Falchikov’s assessment theory [26] conceptualizes assessment as a central element of the learning process, shaping both instructional practices and student outcomes. In the context of emerging AI technologies, this framework underscores the urgency of rethinking conventional assessment practices in higher education. Their work suggests that traditional approaches often fail to capture the complex forms of learning fostered by AI tools, thereby strengthening the case for adopting more authentic and formative assessment strategies.

A study [27] highlighted that teachers are concerned about the authenticity of student work when AI-generated content is prevalent, leading to challenges in maintaining academic integrity. These concerns align with Boud and Falchikov’s assertion that assessment practices must evolve to remain relevant and effective in the face of technological advancements.

A study [28] surveyed 358 university teachers across the Middle East, exploring their views on using GenAI for student assessment. While many faculty adopted AI tools to streamline grading and feedback, the study revealed deep concerns about academic integrity, fairness, and the erosion of disciplinary judgment. Distrust in AI-mediated assessment arose particularly when institutional policies were unclear or ill-defined, exacerbating anxiety about delegating evaluative authority (performance expectancy, effort expectancy). Faculty underscored the need for ethical guidelines and professional development to mitigate these affective tensions.

In a mixed-methods study [3] of 178 US-based instructors, Lyu et al. highlight that faculty often report simultaneous trust and distrust toward GenAI: they recognize its potential but also fear misrepresentation, hallucination, and over-automation of assessment tasks. This ambivalence fuels anxiety about the reliability of AI-generated grades and feedback and raises concerns about losing control over evaluative criteria. Their qualitative analysis illustrates how assessment distrust contributes to emotional disruption, prompting some instructors to limit AI usage or modify assessment design strategies.

A survey [29] of faculty and students in Vietnam and Singapore found persistent skepticism toward AI-generated feedback, particularly regarding grading reliability and fairness. While AI support was generally accepted when paired with human oversight, fully automated grading tools were met with distrust. The study further highlighted assessment-related anxieties tied to unclear policies, opaque detection systems, and fears of misgrading or bias, pointing to the importance of institutional frameworks for building confidence in AI-assisted assessment.

Across these studies, a consistent pattern emerges: educators experience emotional disruption when AI integration forces a reevaluation of trusted pedagogical practices and professional roles. Cognitive dissonance is especially salient, as faculty navigate the tension between their educational values and the technological affordances of immediate efficiency or automation. Finally, assessment-related anxiety surfaces strongly in contexts where AI is used for grading or feedback without institutional clarity, raising concerns about fairness and academic integrity.

E.GAPS AND CONTRIBUTION

Current research on faculty experiences with GenAI is mostly quantitative or mixed-methods and focuses on intentions, satisfaction, and perceived usefulness. Few studies examine the deeper emotional and identity-related aspects. Qualitative insight remains limited into how faculty actually feel: the frustration of seeing their teaching identity shift, the stress surrounding grading and assessment, and the internal conflict between their educational values and the pressure to work more efficiently. Additionally, most studies do not draw on frameworks such as emotional labor or affect theory to make sense of these experiences.

In short, existing research highlights AI’s expanding role in higher education and the mixed feelings faculty have about it, where hope clashes with emotional unease. While these studies acknowledge this tension, they often neglect its deeper human dimensions, as few explicitly draw on emotion-centered theories, such as affect theory or identity theory, to examine how faculty experience AI integration in the classroom.

III.METHODOLOGY

A.RESEARCH DESIGN

This study adopts a qualitative exploratory design to investigate faculty members’ affective barriers to adopting AI in higher education. This approach is suitable for exploring complex emotional and psychological responses that may not be fully captured through quantitative methods. Semi-structured interviews were conducted to understand how university educators emotionally and cognitively navigate the rise of generative AI in their teaching practice. Each session lasted approximately 35 minutes and was recorded with participants’ consent. Thematic analysis was used to code transcripts, and emerging patterns were reviewed iteratively.

B.PARTICIPANTS

The participants for this study were recruited through the official WhatsApp alumni group of Saint Joseph University of Beirut (USJ). An invitation message describing the purpose of the study and the voluntary nature of participation was sent to all 364 members of the group. The message included information about the study topic, confidentiality procedures, and an invitation to express interest in joining an online interview (Appendix A).

A total of 55 members responded to the initial invitation, and each was screened based on predefined inclusion and exclusion criteria. The inclusion criteria required that participants be currently teaching in a higher education institution, regardless of discipline, contract type, or geographic location. The exclusion criteria eliminated retired instructors.

After screening the 55 volunteers, 20 participants met the inclusion criteria and were invited to take part in the study. The sample size of 20 participants was deemed appropriate for achieving thematic saturation in qualitative research. All participants were actively teaching at either the undergraduate or postgraduate level. Fourteen of the 20 participants were teaching in Lebanon and six in the Gulf region. Those who agreed received a unique Zoom meeting link for scheduling the interview. All interviews were conducted online, recorded with participant consent, and subsequently transcribed. For consistency in analysis, interviews originally conducted in Arabic or French were translated into English prior to coding and thematic analysis.

The final sample represented a diverse group of university instructors from different disciplines, academic ranks, and teaching contexts (Table I). This diversity enhanced the richness of the data and allowed for a more comprehensive understanding of faculty affective barriers to AI adoption in higher education.

Table I. Participants’ codes and disciplines

| Participant code | Discipline |
|---|---|
| 1 | Law |
| 2 | Philosophy |
| 3 | Philosophy |
| 4 | Humanities |
| 5 | Education |
| 6 | English Literature |
| 7 | Media Studies |
| 8 | Political Science |
| 9 | Computer Science Sociology |
| 10 | Law |
| 11 | Education |
| 12 | Business |
| 13 | Cultural Studies |
| 14 | History |
| 15 | Sociology |
| 16 | English Literature |
| 17 | Communication |
| 18 | Psychology |
| 19 | Political Studies |
| 20 | English Language and Cultural Studies |

Table II. NVivo-style thematic coding framework

| Theme | Subtheme | Example codes |
|---|---|---|
| Erosion of Pedagogical Identity | Loss of Professional Purpose | “What’s my role now?” |
| Emotional Disruption and Cognitive Dissonance | Conflicted Emotions Toward AI | “I’m both fascinated and terrified.” |
| Assessment Anxiety and Distrust | Fear of Cheating & Surveillance Pressure | “How do I even know they wrote this?” |

Table III. Samples of teachers’ dissonance and conflicting cognition

| Situation | Conflicting cognitions | Dissonance reaction |
|---|---|---|
| Using ChatGPT to save time | “AI undermines learning” vs. “It’s efficient for admin tasks” | Guilt, self-justification |
| Suspecting student use of AI | “I value student trust” vs. “I think they’re cheating” | Disillusionment or stricter policies |
| Institutional AI enthusiasm | “I believe in human-centered teaching” vs. “My university is pushing AI adoption” | Emotional conflict, resistance |

Table IV. Assessment distrust linked to assessment theory of Boud & Falchikov

| Challenge | Link to Boud & Falchikov |
|---|---|
| ChatGPT generating essays | Undermines traditional summative tasks; shows need for authentic, process-oriented assessment |
| Teacher loss of evaluative role | Calls for shifting to student self-assessment and peer assessment to build autonomy |
| AI as shortcut vs. learning tool | Aligns with their critique of superficial outcome-driven assessment; highlights the urgency of rethinking assessment purpose |

Table V. Relating fear of cheating and surveillance to Foucauldian theory

| Practice | Foucauldian interpretation |
|---|---|
| Teachers checking for AI use | Faculty take on the role of disciplinary agents within a broader surveillance system. |
| Students avoiding detection by masking AI use | Students internalize the gaze and modify behavior based on fear of surveillance. |
| Software like Turnitin or AI detection tools | These tools represent automated panopticons: mechanisms of control that create a culture of suspicion. |
| Institutional policies on academic integrity | These function as |

Table VI. Summary of affective patterns

| Theme | Dominant emotions | Reported impact |
|---|---|---|
| Erosion of identity | Loss, disorientation, fear | Weakened self-efficacy |
| Emotional disruption | Ambivalence, anxiety | Psychological fatigue |
| Assessment anxiety | Distrust, frustration | Shifts toward defensive pedagogy |

C.DATA COLLECTION AND ANALYSIS

Data were collected through semi-structured interviews conducted via Zoom or in person between March and June 2025. Interviews ranged from 20 to 35 minutes. Questions (Appendix B) focused on:

1. Erosion of Pedagogical Identity
2. Emotional Disruption and Cognitive Dissonance
3. Assessment Anxiety and Distrust

Interviews, recorded with participants’ consent, were transcribed and analyzed using thematic analysis [5], which follows a six-phase framework: (1) familiarization with data, (2) generation of initial codes, (3) searching for themes, (4) reviewing themes, (5) defining and naming themes, and (6) producing the report.

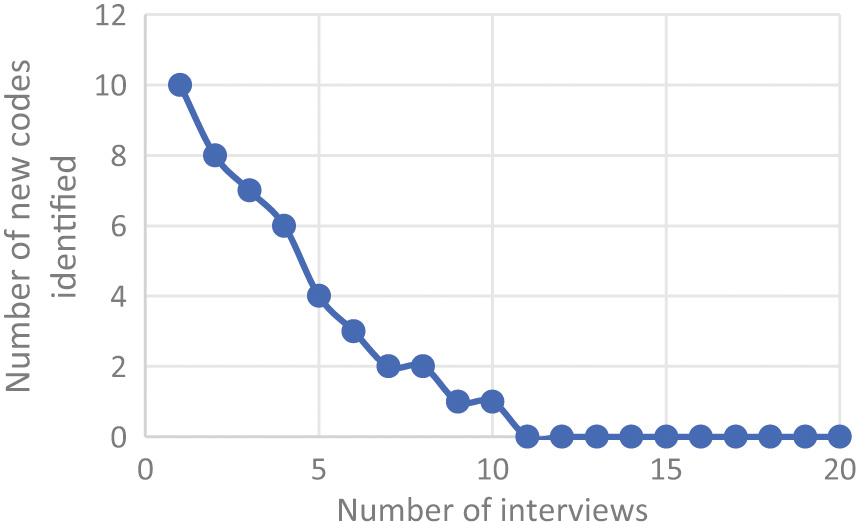

Thematic saturation was monitored continuously throughout the analysis of faculty interviews on affective barriers to AI adoption in higher education. Each interview was transcribed and coded immediately, and a saturation tracking table was used to record the emergence of new codes related to emotional, psychological, and attitudinal barriers toward AI. During the first 12 interviews, core themes—such as anxiety about AI replacing pedagogical roles, lack of confidence in using AI tools, fear of losing academic integrity, and uncertainty about institutional expectations—were identified. After interview 13, no new themes emerged, indicating that theme saturation had been reached. The remaining interviews (14–20) provided additional depth, nuance, and variation within the established affective barriers, contributing to meaning saturation (Fig. 1). This approach follows the recommendations of [30,31] who distinguish between theme saturation (no new themes) and meaning saturation (full understanding of theme complexity). Therefore, the final sample of 20 interviews was judged sufficient to capture both the breadth and depth of faculty affective barriers to adopting AI in higher education.
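The logic of a saturation tracking table can be illustrated with a short sketch. This is a minimal illustration only, assuming each interview is represented by the set of codes assigned to it. The names `SaturationRow`, `track_saturation`, and `last_interview_with_new_codes`, as well as the sample code labels, are hypothetical and are not taken from the study’s data or from NVivo.

```python
from typing import List, NamedTuple, Set


class SaturationRow(NamedTuple):
    """One row of a saturation tracking table."""
    interview: int          # 1-based interview number
    new_codes: List[str]    # codes seen for the first time in this interview
    cumulative_codes: int   # distinct codes seen so far


def track_saturation(codes_by_interview: List[Set[str]]) -> List[SaturationRow]:
    """Record which codes are new in each interview, in interview order."""
    seen: Set[str] = set()
    rows: List[SaturationRow] = []
    for number, codes in enumerate(codes_by_interview, start=1):
        new_codes = sorted(codes - seen)   # codes not observed before
        seen |= codes
        rows.append(SaturationRow(number, new_codes, len(seen)))
    return rows


def last_interview_with_new_codes(rows: List[SaturationRow]) -> int:
    """Return the number of the last interview that added a new code (0 if none)."""
    last = 0
    for row in rows:
        if row.new_codes:
            last = row.interview
    return last


# Hypothetical example data: five interviews with illustrative code labels.
sample_interviews = [
    {"role_replacement_anxiety", "low_tool_confidence"},
    {"role_replacement_anxiety", "integrity_fear"},
    {"institutional_uncertainty", "integrity_fear"},
    {"low_tool_confidence"},
    {"integrity_fear", "role_replacement_anxiety"},
]

table = track_saturation(sample_interviews)
saturation_point = last_interview_with_new_codes(table)

for row in table:
    print(row.interview, row.new_codes, row.cumulative_codes)
print("Last interview contributing a new code:", saturation_point)
```

In a table of this kind, the interview after which `new_codes` stays empty marks theme saturation. Later interviews can still add depth within existing themes, which corresponds to meaning saturation and is not captured by a simple count of new codes.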

NVivo 14 was used to assist with data organization and coding. NVivo is a qualitative data analysis (QDA) software package used to organize, code, and analyze unstructured data, in this case interview transcripts. Thematic coding, the process of systematically identifying, categorizing, and interpreting recurring themes (patterns of meaning) in data, was carried out in NVivo.

Initial codes were generated inductively from the transcripts and then grouped into themes reflecting emotional and psychological barriers. Each theme was grounded in multiple participant excerpts and validated through both inter-coder agreement and member checking. Interviews were audio-recorded, transcribed using the TurboScribe application, and anonymized. Inter-coder agreement was established by a second researcher independently coding 20% of the transcripts, with a 91% agreement rate after discussion (Appendix C).
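An agreement rate of this kind can be computed as in the sketch below. The text does not state which agreement statistic was used, so the sketch assumes simple percent agreement over segments coded by both researchers. Cohen’s kappa is included only as a commonly used chance-corrected alternative and is not reported in this study. The function names and the sample labels are hypothetical.

```python
from collections import Counter
from typing import Sequence


def percent_agreement(coder_a: Sequence[str], coder_b: Sequence[str]) -> float:
    """Share of segments to which both coders assigned the same code."""
    if len(coder_a) != len(coder_b) or len(coder_a) == 0:
        raise ValueError("Both coders must label the same non-empty set of segments.")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return matches / len(coder_a)


def cohens_kappa(coder_a: Sequence[str], coder_b: Sequence[str]) -> float:
    """Chance-corrected agreement: (p_o - p_e) / (1 - p_e)."""
    n = len(coder_a)
    observed = percent_agreement(coder_a, coder_b)
    counts_a, counts_b = Counter(coder_a), Counter(coder_b)
    # Expected agreement if each coder labelled independently at their own base rates.
    expected = sum(
        counts_a[label] * counts_b[label] for label in set(counts_a) | set(counts_b)
    ) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used a single identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical example: ten transcript segments labelled by two coders.
coder_1 = ["identity", "identity", "dissonance", "assessment", "assessment",
           "identity", "dissonance", "dissonance", "assessment", "identity"]
coder_2 = ["identity", "identity", "dissonance", "assessment", "assessment",
           "identity", "dissonance", "dissonance", "assessment", "dissonance"]

agreement = percent_agreement(coder_1, coder_2)
kappa = cohens_kappa(coder_1, coder_2)
print(f"Percent agreement: {agreement:.0%}")
print(f"Cohen's kappa: {kappa:.2f}")
```

Percent agreement is easy to interpret but does not correct for agreement expected by chance, which is why a chance-corrected statistic is often reported alongside it.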

Reflexive journaling was used to monitor researcher subjectivity, and member checking was conducted with six participants to validate key themes (Appendix D).

Reliability was ensured through:

- Inter-coder agreement (a second coder analyzed 20% of the transcripts)
- Reflexive journaling by the researcher
- Member checking to validate interpretations

IV.THEMATIC ANALYSIS OF FINDINGS

This section presents detailed findings and analysis of university professors’ affective responses to the introduction of ChatGPT and other LLMs, organized into three overarching themes developed through thematic analysis [5]. These themes reflect three major domains:

1. Erosion of Pedagogical Identity
2. Emotional Disruption and Cognitive Dissonance
3. Assessment Anxiety and Distrust

A.THEME 1: EROSION OF PEDAGOGICAL IDENTITY

Many participants expressed a sense of diminishing authority and confidence in their pedagogical roles. For example, one literature professor noted, “I used to feel like the source of knowledge. Now I feel redundant.” (Participant 20). Others voiced concern that AI tools undermine the intellectual labor that teaching seeks to cultivate. This erosion of confidence often manifested in self-doubt, with several participants questioning whether their assessments or teaching strategies were still “meaningful.” Participants described feeling emotionally depleted by the constant need to reinterpret their role. The rapid pace of AI developments, combined with inconsistent institutional guidance, led to a sense of pedagogical whiplash. A history professor shared, “Every month it feels like the rules have changed again. What am I even assessing now: knowledge, creativity, or prompt engineering?” This ambiguity left many teachers feeling isolated and unsupported.

The most frequently coded theme reflected a deep sense of ontological disruption among faculty members. Many described the sudden emergence of LLMs as not just a technical challenge but a threat to their professional identity. Participants spoke of feeling “displaced,” “less essential,” or even “substitutable” in the wake of AI’s ability to generate coherent, sometimes excellent responses to student assignments.

Two core subthemes emerged:

- Loss of Professional Purpose: Teachers reported struggling to define their role when students can bypass critical thinking tasks with a prompt.
- Shift from Expert to Facilitator: Many reflected on a reconfiguration of their identity, from knowledge transmitters to moderators of process or ethics.

This theme intersects with identity theory [3] and role strain theory [32], suggesting that faculty are navigating competing role demands between educator, evaluator, and now, AI ethicist. Erikson’s identity theory [3] frames identity as a lifelong, dynamic process shaped by psychosocial challenges. In times of major disruption, like the rise of AI in education, individuals may experience identity crises, prompting re-evaluation of their roles, values, and sense of purpose. This theory is highly applicable to the emotional and psychological challenges university teachers face today. For example: “If I’m no longer the expert in the room, in other words, if AI can ‘teach’ content then what’s my role? That’s an identity question, not just a pedagogical one.”

As for role strain theory [32], emotional and cognitive stress occurs when expectations within a single role are too demanding or contradictory. It explains emotional disruption, burnout, and resistance among educators by revealing:

- Why many feel overwhelmed despite being committed to their profession.
- How changing technologies introduce intra-role conflict (within the role of ‘teacher’).
- The psychological cost of institutional expectations that may not align with everyday realities.

B.THEME 2: EMOTIONAL DISRUPTION AND COGNITIVE DISSONANCE

This theme captured the emotional ambivalence and internal dissonance many faculty members experience in response to LLMs. While a few embraced the “potential” of generative AI as a tool, the majority described a disorienting mix of fascination, fear, skepticism, and guilt. This tension produced what several participants described as “ethical fatigue,” wherein educators felt torn between institutional pressures to innovate and their own professional values: “It’s exciting, but I also wake up at night worrying what this means for my job.” (Participant 12)

Two primary subthemes were identified:

- Conflicted Emotions Toward AI: Faculty found themselves intellectually intrigued but emotionally destabilized.
- Disorientation and Unease: A majority expressed feeling like they were operating without a “moral compass” or “instruction manual.”

These emotional responses resonate with cognitive dissonance theory [24], wherein teachers’ long-standing pedagogical values now clash with a new technological reality. Cognitive dissonance theory is a foundational psychological theory that explains the emotional and mental discomfort people feel when they hold two or more contradictory beliefs, values, or attitudes, or when their behavior is inconsistent with their beliefs. This discomfort, called dissonance, motivates people to restore consistency, often by changing their beliefs, justifying their behavior, or minimizing the conflict. As one participant put it: “I use ChatGPT to help with marking rubrics, but I still feel uneasy when students use it for essays. It’s like I’m part of the problem I’m trying to avoid.” (Participant 16).

C.THEME 3: ASSESSMENT ANXIETY AND DISTRUST

This theme revealed a deep distrust of traditional assessment methods and anxiety about authentic student engagement. Instructors voiced concern that LLMs compromise the integrity of student work, making it difficult to distinguish genuine learning from algorithmic mimicry. “I can’t tell anymore if students actually understand the content or just prompt ChatGPT well.” (Participant 03)

Subthemes included:

- Fear of Cheating and Surveillance Pressure: Some faculty began contemplating more invasive surveillance tools or oral exams.
- Devaluation of Academic Work: Others reported a demoralizing sense that “essays no longer matter” or that “grading feels futile.”

This theme aligns with assessment theory [26] and connects with surveillance culture [33], as teachers feel both complicit in and victimized by a system that pushes them toward punitive monitoring rather than trust-based pedagogy. Assessment theory [26] focuses on rethinking the role of assessment in higher education, shifting from a teacher-centered, summative model toward a student-centered, learning-oriented approach. Boud and Falchikov’s work is central to contemporary debates about assessment for learning, particularly in light of challenges posed by digital technologies like AI.

In addition, surveillance culture, as theorized by Foucault in Discipline and Punish: The Birth of the Prison [33], refers to a societal condition in which individuals regulate their own behavior because they are aware they might be watched. It is not just about being surveilled; it is about internalizing that surveillance. This dynamic was closely reflected in several participants’ accounts. For example: “Grading used to be about assessing understanding. Now it feels like a constant investigation. I’m Googling phrases, checking metadata, looking for signs of AI. It’s draining.” (Participant 14, History)

The findings reveal profound emotional turbulence among faculty as they navigate AI integration, with three dominant themes shaping their affective experiences. First, the erosion of professional identity evokes loss, disorientation, and fear, as educators grapple with a destabilized sense of purpose. This emotional strain directly undermines their self-efficacy, leaving some questioning their role in an AI-augmented classroom. Second, emotional disruption manifests as ambivalence and anxiety, reflecting the tension between recognizing AI’s potential and fearing its implications. This unresolved conflict contributes to psychological fatigue, as faculty expend mental energy reconciling competing emotions. Finally, assessment anxiety—driven by distrust and frustration toward AI’s reliability—triggers a shift toward defensive pedagogy, where educators adopt rigid or overly controlled teaching practices to reassert authority. Collectively, these affective responses highlight a critical gap in AI implementation strategies: while technical training is prioritized, the emotional labor of adaptation remains unaddressed, risking burnout and resistance.

V.DISCUSSION

Our findings underscored the complex emotional terrain of university teaching in the age of AI. Rather than viewing technological disruption as a purely cognitive or structural challenge, this study revealed the deeply affective nature of adaptation. The erosion of pedagogical confidence, ethical dissonance, and emotional fatigue were not isolated effects but systemic consequences of institutions that prioritized innovation over relational pedagogy.

While acknowledging the impressive capabilities of ChatGPT, many participants expressed ethical discomfort. A creative writing instructor shared, “On one hand, I want students to use the tools of the future. On the other, I feel complicit in devaluing the skills I’m supposed to be teaching.” This tension gave rise to what several participants described as ethical fatigue: a state of being torn between institutional pressures to innovate and a deep commitment to professional and disciplinary values. This “ethical fatigue” emerged when teachers, as moral agents [34], were forced to reconcile AI’s efficiencies with perceived threats to their disciplinary ethos. The history professor’s lament, “What am I even assessing now?”, reflected a loss of professional epistemic control [35], exacerbated by what participants described as reactive, top-down AI policies.

Participants described feeling emotionally depleted by the constant need to reinterpret their role. The rapid pace of AI developments, combined with inconsistent institutional guidance, led to a sense of pedagogical whiplash. This ambiguity left many teachers feeling isolated and unsupported, which again points to the affective, rather than purely cognitive or structural, nature of adaptation. The creative writing instructor’s dilemma of feeling “complicit in devaluing the skills I’m supposed to be teaching” epitomized what has been identified as emotional dissonance: the strain of performing institutional optimism while privately grappling with value conflicts [6].

Despite the emotional strain, several educators expressed tentative optimism. A few spoke about reimagining assessment practices, designing AI-aware pedagogy, or co-creating classroom norms with students. “Maybe this is a chance,” one participant said, “to rethink what learning is really for.” These narratives of hope were often tempered by caution, reflecting “cruel optimism” [36].

These findings also resonated with the call in [37] to reclaim the affective space in higher education and with the theory of emotional labor [11]. Teachers are responsible not only for content delivery but also for the emotional regulation required to sustain professional identity amid uncertainty. Importantly, the guarded hope expressed by some participants suggested that affect can be a generative force, fueling creativity, experimentation, and renewed ethical engagement. Together, these findings underscored the need for institutions to take a more holistic approach: not just providing AI infrastructure but also addressing the emotional and subjective experiences of faculty. Professional development, clear policy guidance, and open forums for discussing ethical-identity tensions would be essential to mitigate emotional disruption and sustain faculty engagement in AI-mediated educational environments.

VI.RECOMMENDATIONS FOR SUSTAINABLE AI INTEGRATION IN HIGHER EDUCATION

The findings of this study revealed that the integration of generative AI into higher education triggered significant affective challenges among faculty members, including erosion of pedagogical identity, emotional disruption, and assessment anxiety. These emotional responses highlighted the need for institutions to develop comprehensive support systems that would address both the technological and human dimensions of AI adoption. Drawing on the study’s results and supported by scholarship in affect theory [38], we propose the following recommendations for sustainable AI integration.

First, institutions must prioritize psychological and professional support for faculty navigating AI-induced disruptions. This should include targeted training programs that help educators manage the emotional labor of teaching in an AI-mediated environment [6]. Workshops grounded in critical pedagogy [39] can help faculty navigate the ethical and emotional challenges posed by these technologies.

Second, policy development around AI implementation must be collaborative and faculty-centered, with spaces where faculty can share experiences and coping strategies. Research on organizational change [40] demonstrates that inclusive decision-making processes lead to more successful adoption of innovations. Institutions should establish cross-disciplinary faculty task forces to co-create AI guidelines, with transparent communication channels to mitigate distrust.

Third, assessment practices need reimagining to rebuild trust between faculty and students. Moving toward authentic assessment models [41] that emphasize process over product could reduce anxiety about academic integrity while maintaining pedagogical rigor. Any use of AI detection tools should be accompanied by clear communication about their limitations to prevent the erosion of faculty–student relationships.

Fourth, it is important to note that all faculty members interviewed in this study shared the same national identity—Lebanese. This homogeneity represents a limitation and highlights the need for future research that examines how instructors’ national backgrounds, as well as cross-disciplinary differences, may influence attitudes toward adopting AI in higher education.

Finally, institutions should invest in longitudinal research to monitor how AI adoption impacts faculty well-being over time. This data should inform iterative improvements to support systems and policies. The author in [42] argues in his work on emotional geographies in education that sustainable technological integration requires careful attention to the affective ecologies of learning environments. By adopting these recommendations, higher education institutions can develop human-centered approaches to AI that support both pedagogical innovation and faculty well-being.

VII.CONCLUSION

The rise of generative AI in higher education has created not only technical and pedagogical challenges but also profound affective disturbances.

In short, this research highlighted AI’s expanding role in higher education and the mixed feelings faculty had about it, where hope clashed with emotional unease. While existing studies revealed this tension, they often overlooked the deeper human dimensions: few used emotion-centered theories, examined the hidden toll of emotional labor, or explored how faculty see themselves in this changing landscape. This study addressed those gaps by listening closely to educators through in-depth interviews and focusing on their struggles: the erosion of their teaching identity, the stress of balancing values with efficiency, and the anxiety around AI’s impact on assessment. By centering emotion and lived experience, this work did not just analyze AI adoption; it called for policies that recognize and care about how these changes truly feel for faculty. Understanding how teachers feel is essential to designing pedagogical strategies and institutional responses that are not only technologically robust but also emotionally and ethically sound. Future research must attend to these affective undercurrents, acknowledging that teaching is not merely an act of knowledge transmission but a deeply embodied, relational, and affectively charged practice.

By focusing on how AI impacted pedagogical identity, generated affective tensions, and elicited emotional responses, this study contributed to a more holistic understanding of what it means to teach in an AI-mediated academic environment. It responded to a pressing need to design institutional strategies that attend not only to technological infrastructure but also to the emotional and professional well-being of educators. John Dewey once suggested that in times of uncertainty, we need to “climb a tree” to gain a clearer perspective. We are at a fork in the road that will determine the future of higher education. His point reminds us that as we rethink and reimagine higher education, we may need to take a higher vantage point to look at the present. Each of us should take on this responsibility, and perhaps we can climb the tree ourselves and gain insight into the future without hiring a tree-moving service.